[Note: If you have a degree in maths or statistics or economics, some of this might be ill informed. Forgive me, I’m a political science graduate just muddling through! Similarly, I’m not too well-versed in decision theory so much of this is likely well-trodden ground. Future posts also won’t be so maths heavy.]

If you want to figure out whether something is a good bet, the recommended method is to determine the ‘Expected Value’ of the bet. Suppose, for instance, that someone gives you odds of 3/1 (an implied probability of 25%) that a fair coin comes up heads, and you decide to bet $10. This is a good bet - there is a 50% chance that you win $30, and a 50% chance that you lose $10. The Expected Value of your bet, then, is (0.5 * 30) + (0.5 * -10), which is equal to $10, meaning that if you took the bet lots and lots of times, your average winnings per bet would be very close to $10.
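For anyone who prefers to see the arithmetic spelled out, here is a minimal Python sketch of the coin bet. The helper name `expected_value` is my own and not from any library; it just sums probability times payoff.

```python
def expected_value(outcomes):
    """Sum probability * payoff over a list of (probability, payoff) pairs."""
    return sum(p * payoff for p, payoff in outcomes)

# The coin bet: 50% chance of winning $30, 50% chance of losing $10.
coin_bet = [(0.5, 30.0), (0.5, -10.0)]
ev_coin = expected_value(coin_bet)
print(ev_coin)  # 10.0
```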

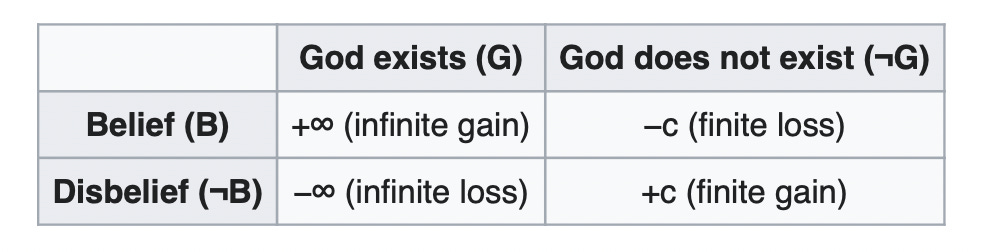

But sometimes using Expected Value leads us to weird results. Consider Pascal’s Wager, the argument that you ought to believe in God because the expected value is infinite. To quickly demonstrate this, note that the value of going to heaven is ∞, whereas the value of not believing in God assuming God does not exist is finite. Accordingly, you can use the table above to determine the expected value of believing in God versus not believing in God. Suppose that the probability that God exists is 0.000001%, and the payoff from believing in God if God does not exist is -1000 utils.

The Expected Value of believing in God, then, is:

(0.00000001 * ∞) + (0.99999999 * -1000) = ∞

The Expected Value of not believing in God is:

(0.00000001 * -∞) + (0.99999999 * 1000) = -∞

So, if you use Expected Value as a way of choosing to believe in God or not, you ought to be a believer. You may object that if God does exist, he isn’t likely to allow someone into heaven because they believed in him on account of this calculation - but as long as you believe that the chance of this working is non-zero, the EV of believing in God is still infinite.
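As a rough illustration of why any non-zero probability is enough, here is a small sketch using Python's floating-point infinity. The function name `ev_believe` and its default of -1000 utils are assumptions taken from the example numbers above, and floating-point infinity is only a stand-in for the idea of an infinite payoff.

```python
import math

def ev_believe(p_god, payoff_if_no_god=-1000.0):
    """EV of believing: infinite reward with probability p_god,
    a finite payoff otherwise."""
    return p_god * math.inf + (1 - p_god) * payoff_if_no_god

for p_god in (1e-8, 1e-100, 1e-300):
    print(p_god, ev_believe(p_god))  # inf every time
```

Note that with a probability of exactly zero the product 0 * ∞ is undefined (Python returns `nan`), which matches the point that the argument only needs the probability to be non-zero.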

Another good example is Pascal’s Mugging, a fun thought experiment that demonstrates the same point. For this scenario, take it as given that the goal is only to maximise how much money you come away with. Suppose a man comes up to you and says that if you give him $100, he will give you $200 tomorrow. You assume that the actual chance of him coming to give you $200 tomorrow is about 0.001%, or some other similar tiny number. The EV calculation says that you shouldn’t give him your $100. Here’s the calculation: (0.00001 * 200) + (0.99999 * -100) = -99.997.

But the implication here is that, if the amount of money that the mugger offers is large enough, you ought to give him your cash. Imagine that the mugger keeps doubling the amount that he claims he will give you: there must be some point at which the expected value of you giving him the money is positive. Suppose he says he is from another dimension where money is infinite, and that he can give you vast, vast sums of money that you can’t even imagine (let’s say a trillion to the power of a trillion to the power of a trillion dollars, or more if need be) if you agree to give him the hundred dollars. While the chance of him telling the truth is extremely small, if we accept that it is not literally 0%, there must be some value that he can offer you at which point the EV of giving the money is positive.
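To make the tipping point concrete, here is a sketch that follows the same calculation as above (probability times the promised amount, minus the stake weighted by the chance of losing it) and solves for the promise at which the EV reaches zero. The function names are my own, and `Fraction` is used only to keep the arithmetic exact.

```python
from fractions import Fraction

def mugging_ev(p_honest, promised, stake):
    """EV in the same form as the calculation above."""
    return p_honest * promised - (1 - p_honest) * stake

def break_even_promise(p_honest, stake):
    """Promised amount at which mugging_ev is exactly zero."""
    return (1 - p_honest) * stake / p_honest

p = Fraction(1, 100_000)  # 0.001%
print(float(mugging_ev(p, 200, 100)))  # -99.997
print(break_even_promise(p, 100))      # 9999900
```

So with a fixed 0.001% credence, any promise above roughly $10 million gives a positive EV, which is exactly the uncomfortable implication.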

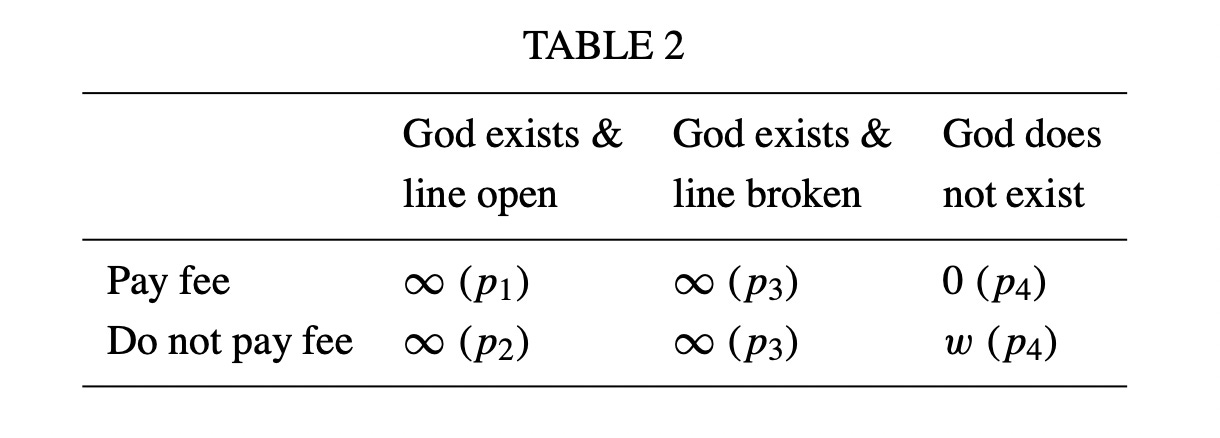

So, what should we do? It seems pretty obvious, at least to me, that you should not give the money to the mugger, regardless of how much he offers you. On the wager I’m less certain, because the costs of believing in God are fairly low (I imagine it might actually make you happier to believe in God). But we can make the wager irrational by using an example from Alex Tabarrok - suppose that I offer you a very small increase in your chance of getting to heaven if you give me all of the money you have and all of your possessions, because I have a direct phone line to God and can put a word in on your behalf. The expected value is still infinite - using the table above, you can plug in the numbers just like we did with the original Pascal’s wager example, as long as we assume that p1 is greater than p2, even if only by a tiny amount.

And even if you think that Pascal’s wager is not so clear, this alternate version is clearly a bad choice - even though the expected value of giving me all of your wealth is infinite, you still shouldn’t do it.

So, what do we do about this? I’m used to using EV and Expected Utility when thinking about what the rational thing to do is, and yet it seems there are scenarios where EV leads to us making a totally irrational decision. When you are working with extremely low probabilities (plus high costs if the more likely option eventuates), Expected Value falls apart. This is a problem for me personally - the reason I vote is almost totally based on reasoning that uses Expected Value (borrowing an argument from David Shor): the chance of my vote making a difference to who is in government is incredibly small (roughly 1/10,000,000 or less), but I do it because the total amount of utility caused by a change in government is huge, so the EV of voting is fairly high. But if we accept the idea that EV falls apart when the chance of an event actually occurring (such as the vote changing who is in government) is extremely low, EV doesn’t seem suitable for determining whether I ought to vote or not, and I’m left with (in my view, much less convincing) arguments that depend on civic duty.

This also seems to have implications for the Effective Altruist movement - should we incur some drastic cost now to very slightly decrease the chance of suffering to huge, huge numbers of people in the far future? The answer you give may be contingent on your view of Expected Value.

The lottery question is also one I find interesting (see the tweet above). I don’t play the lottery ever, and I’m not sure I would even if the EV was positive, although recently someone I discussed this with made the point that if lots of Effective Altruists played the lottery if and only if the EV was positive, it could be something worth doing. If you know more than I do about Expected Value or have some view on how we ought to think about Expected Value when the chances of an event occurring are incredibly small, let me know in the comments or message me on Twitter.

Math/econ here. On Pascal's mugger, our probability that the mugger will keep their promise is allowed to decrease with how much money they promise. So, there is no reason the expected return should increase with their promise.
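A quick sketch of what this looks like numerically. The decay rule here is purely an assumption for illustration: credence shrinks in inverse proportion to the promised amount, starting from the 0.001% and $200 in the post. Other decay rules are possible; the point is only that a credence falling at least this fast keeps the EV from growing.

```python
def credence(promised, base_p=1e-5, base_promise=200.0):
    """Hypothetical belief model: the bigger the promise, the less we believe it."""
    return base_p * min(1.0, base_promise / promised)

def discounted_mugging_ev(promised, stake=100.0):
    """EV in the post's form, with credence depending on the promise."""
    p = credence(promised)
    return p * promised - (1 - p) * stake

promises = (200.0, 1e6, 1e12, 1e100)
for promised in promises:
    print(promised, discounted_mugging_ev(promised))  # always negative
```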

For the lottery example, we need to distinguish between expected value and expected utility. Even if the expected return on the lottery were positive, almost everyone is risk-averse, which is why you said you would still probably not buy such a lottery ticket.
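Here is a minimal sketch of the distinction, assuming a logarithmic utility of wealth as one common way to model risk aversion. The gamble and the wealth level are made-up numbers chosen only for illustration.

```python
import math

def expected_value_change(p_win, prize, cost):
    """Expected change in wealth from buying the gamble."""
    return p_win * prize - cost

def expected_log_utility(wealth, p_win, prize, cost):
    """Expected log-wealth after buying the gamble (assumed utility function)."""
    win = wealth - cost + prize
    lose = wealth - cost
    return p_win * math.log(win) + (1 - p_win) * math.log(lose)

wealth, p_win, prize, cost = 10_000.0, 0.01, 1_000_000.0, 5_000.0
ev_change = expected_value_change(p_win, prize, cost)
utility_if_buy = expected_log_utility(wealth, p_win, prize, cost)
utility_if_skip = math.log(wealth)
print(ev_change > 0, utility_if_buy < utility_if_skip)  # True True
```

The gamble has a positive expected value, yet a log-utility agent is better off declining it.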

An effective altruist is already working directly with utilities in their calculations and might not need to make this distinction. However, perhaps the appropriate societal utility function (as a function of everyone's individual utility) is somewhat risk-averse.

Pascal's wager makes a lot of assumptions over uncertainty. As an atheist conditioning on my being wrong, I have no idea what God would want. If I had to guess, God would probably prefer a humanist over a selfish and disingenuous monkey throwing darts at the wall. Similarly, I'm doubtful God would want me to subscribe on the selfish, microscopic chance that you put in a good word.

> I’m used to using EV and Expected Utility when thinking about what the rational thing to do is [...]

I think the problem lies in *merely* using an EV calculation to determine what the rational decision is. Rather, a better procedure uses both an EV calculation *as well as* a bet-sizing calculation. For an example of a bet-sizing method, see the Kelly criterion <https://en.wikipedia.org/wiki/Kelly_criterion>. [1]

In essence, a decision to act involves a cost, i.e., an expenditure of some resources (often called the "bet size" in discussions about the Kelly strategy, which tend to involve examples about gambling). For example, in the mugger case, the cost is whatever dollar amount you are handing over to the mugger. In the Pascal's wager case, the cost is the utility loss one takes from adopting a Christian lifestyle (if there is such a utility loss). For voting, the cost is the time you take to read up on candidates and go vote.

The important insight is that if you want to maximize the growth of your wealth (or your cumulative net "utils"), there is an optimal expenditure size for each betting opportunity. Pay either more or less than the optimal amount and the expected value of the logarithm of your capital balance [2] goes *down*, even if the individual transaction has a positive expected value! The expected growth in the logarithm of your capital balance can even become negative (so that your capital tends toward zero over repeated bets) if you are too far off the optimal bet size.
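A small sketch of this effect, using a made-up even-money bet that wins 60% of the time (these numbers are my own, not from the post). For such a bet the Kelly fraction is p - (1 - p)/b = 0.2, and the expected log growth per bet is p * log(1 + f*b) + (1 - p) * log(1 - f).

```python
import math

def expected_log_growth(fraction, p_win, net_odds):
    """Expected change in log-capital per bet when staking `fraction` of capital."""
    return (p_win * math.log(1 + fraction * net_odds)
            + (1 - p_win) * math.log(1 - fraction))

p_win, net_odds = 0.6, 1.0  # hypothetical favourable even-money bet
for fraction in (0.1, 0.2, 0.3, 0.5):
    print(fraction, expected_log_growth(fraction, p_win, net_odds))
```

Growth peaks at the Kelly fraction of 0.2, is lower on either side, and is negative at 0.5, even though every individual bet has a positive expected value.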

A simple case demonstrating this would be a positive EV lottery ticket. In the United States, there are a few national lotteries which work on a system whereby if there is no winner during one time period, the prize money is rolled forward to the next time period, increasing the prize amount for the next time. In these types of lotteries, it is not too uncommon for there to be a string of no-winner periods, which eventually results in the prize amount being so large that lottery tickets have a positive EV. Suppose for example that the Mega Millions lottery has accumulated a prize of $1 billion USD, the cost of a ticket is $2, and the chance of winning is 1 in 250 million. The expected payout of a ticket is thus $4, twice the cost of the ticket. Most asset classes have ROIs nowhere near 100%, so does this mean that if you have $2 to invest, it should go into buying a lottery ticket instead of into a stock index fund? If you merely compare the one-year-forward EV of any given stock index versus the 100% ROI from a lottery ticket, then the answer appears to be, yes, buy the lottery ticket.

But when you look at the situation with your Kelly strategy glasses on, you see that the optimal fraction of your wealth to bet, given the parameters above, is [1/250,000,000 - (1 - 1/250,000,000)/500,000,000] ≈ 1/500,000,000. Let's say your net worth is $1 million USD. The optimal bet size is then 1/5 of a penny. (And if, like most people, you have less than $1 million USD, the optimal bet size is even less.) Since the minimum "investment" is a $2 ticket, and tickets cannot be bought in fractional quantities, after rounding to the nearest whole number, we see the rational number of tickets to buy is 0. That is, despite the huge expected ROI for "investing" in a lottery ticket, you should not buy one. A strategy of repeatedly buying lottery tickets under these circumstances produces a negative growth rate for your capital, which eventually leads to bankruptcy with 100% probability, in the limit of an infinite sequence of such transactions. (Though under other circumstances, such as a vastly greater initial wealth OR the ability to buy up a large fraction of the tickets at once, it could be rational to buy lottery tickets.)
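The numbers above can be checked with a short sketch. It uses the standard Kelly formula f = p - (1 - p)/b with the rounded net odds of 500,000,000 to 1 used above; the variable names are my own, and the log-growth line for a single ticket is an added sanity check rather than part of the original argument.

```python
import math
from fractions import Fraction

def kelly_fraction(p_win, net_odds):
    """Kelly fraction f = p - (1 - p) / b for a bet paying b-to-1."""
    return p_win - (1 - p_win) / net_odds

p_win = Fraction(1, 250_000_000)
net_odds = 500_000_000  # roughly a $1 billion prize per $2 ticket
wealth = 1_000_000      # dollars
ticket_cost = 2         # dollars
prize = 1_000_000_000   # dollars

fraction = kelly_fraction(p_win, net_odds)
optimal_bet = float(fraction * wealth)      # about $0.002, a fifth of a penny
tickets = round(optimal_bet / ticket_cost)  # rounds to 0 tickets

# Expected log growth from buying one whole ticket anyway (negative).
growth_one_ticket = (
    float(p_win) * math.log1p((prize - ticket_cost) / wealth)
    + float(1 - p_win) * math.log1p(-ticket_cost / wealth)
)
print(float(fraction), optimal_bet, tickets, growth_one_ticket)
```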

Applying this reasoning to the mugger scenario: since the win probability is extremely low, the optimal bet size may well be so small that it isn't reasonable to give them as much as a penny.

And of note to the Pascal's wager scenario: even the promise of an infinite reward does not necessarily increase the optimal bet size past a certain maximum. If there is a non-zero probability of losing the bet, there is no reward large enough to make the optimal bet size your entire capital. (However, I did once hear a well-known preacher say that the proper interpretation of Pascal's argument was that the rewards for Christian living *in this life* were such that it was worth doing even if we could not calculate what would happen to us in the next life. Under this interpretation, the "cost" of the bet is negative. That is, being a Christian is a free lunch, and it is rational to take the bet for that reason. The reader may judge the salience of this view for themselves.)
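To see the cap numerically, here is a sketch using the same Kelly formula f = p - (1 - p)/b. As the payout odds b grow without bound, the second term vanishes and the fraction approaches the win probability p, never exceeding it. The probability of 0.001% is borrowed from the mugger example in the post; everything else is illustrative.

```python
import math

def kelly_fraction(p_win, net_odds):
    """Kelly fraction f = p - (1 - p) / b for a bet paying b-to-1."""
    return p_win - (1 - p_win) / net_odds

p_win = 1e-5  # 0.001%
odds_to_try = (1e6, 1e12, 1e100, math.inf)
for net_odds in odds_to_try:
    print(net_odds, kelly_fraction(p_win, net_odds))  # never above p_win
```

With a credence of 0.001%, even an infinite promised reward justifies staking at most 0.001% of your capital under this criterion, which is about a penny on $1,000.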

[1] For technical reasons, the Kelly criterion is a bit simplistic and should only be applied in real life with caution. It is nevertheless a great way to introduce oneself to the underlying concept of bet sizing. See the Wikipedia article for more discussion about some nuances.

[2] Or the logarithm of your net cumulative "utils".