Beware Interesting Ideas

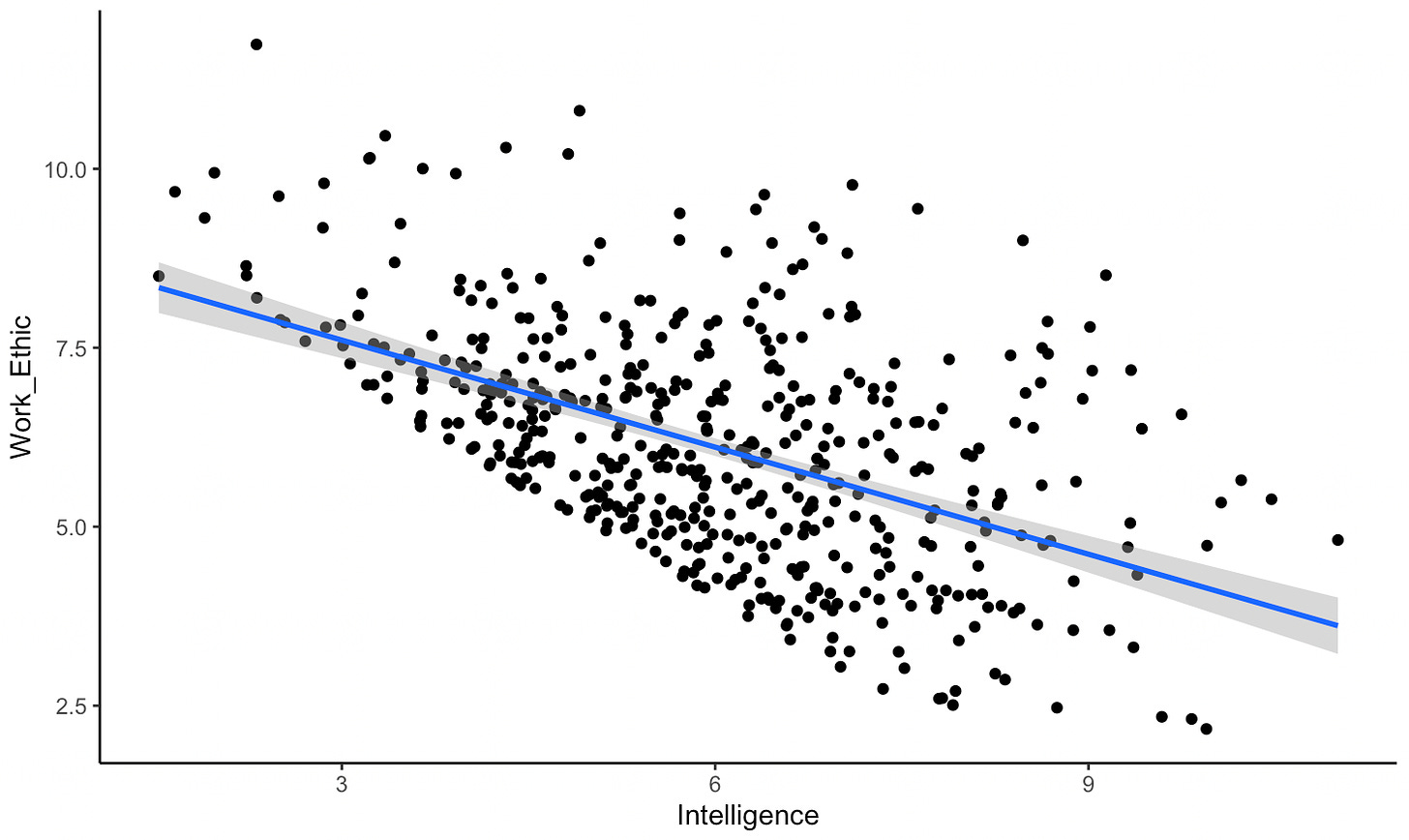

I have a hunch that may or may not be true: if someone mentions an idea to you, the more interesting it sounds, the less likely it is to be true. By ‘idea’, I just mean any fact or theory that someone brings up in conversation that could be either true or false. There’s a fairly simple explanation of why I think my hunch is likely to be right. For an idea to be worth mentioning in conversation (or worth sharing on Twitter), it probably can’t be both uninteresting and untrue. A boring, false idea is unlikely to be the kind of thing someone brings up with you, because there’s no reason to bring it up. If an idea is either interesting or true, there is a reason to mention it: true ideas are sometimes useful, and interesting ideas are, well, interesting.

This is the same reason that, among university students, IQ is negatively correlated with work ethic: you can’t get into university if you’re both stupid and lazy, but you might well get in if you’re either smart or hard-working, even if you aren’t both. I made a quick visualisation of this above. If you rate everyone’s intelligence and work ethic out of 10 and filter out everyone with a combined score of under 10, a negative correlation appears among the people who remain, even if the two traits are unrelated in the population as a whole. This is known as collider bias.
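For anyone who wants to reproduce the filtering effect, here is a minimal sketch in Python. It assumes that intelligence and work ethic are independent and uniformly distributed between 0 and 10, which is an illustrative assumption and not necessarily how the visualisation above was made. The names `pearson`, `simulate` and `cutoff` are hypothetical helpers introduced for this example.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate(n=10_000, cutoff=10, seed=0):
    """Return (correlation for everyone, correlation for those admitted)."""
    rng = random.Random(seed)
    # Assumption: the two traits are independent, each uniform on [0, 10].
    population = [(rng.uniform(0, 10), rng.uniform(0, 10)) for _ in range(n)]
    # The filter: only people whose combined score reaches the cutoff get in.
    admitted = [(iq, effort) for iq, effort in population if iq + effort >= cutoff]
    r_all = pearson(*zip(*population))
    r_admitted = pearson(*zip(*admitted))
    return r_all, r_admitted


r_all, r_admitted = simulate()
print(f"everyone: r = {r_all:+.2f}")
print(f"admitted: r = {r_admitted:+.2f}")
```

Under these assumptions the correlation across the whole population should come out close to zero, while the correlation among the admitted group should be clearly negative. Nothing about the traits themselves has changed; only the selection step creates the relationship. Swapping ‘intelligence’ and ‘work ethic’ for ‘true’ and ‘interesting’ gives the version of the argument that applies to ideas.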

Think about some of the ideas that have gone viral in the past few years. Take ‘Power Posing’ - the idea that changing your posture for a few minutes can temporarily boost your testosterone and make you more confident. Power posing became insanely popular after a TED talk by Amy Cuddy got 64 million views, making it the second most viewed TED talk ever. It’s definitely an interesting idea - can I really improve my chance of getting a job by power posing for a few minutes before the interview? Well, no. The experiments didn’t replicate. There was a bit of back and forth between Cuddy and her critics - she initially claimed that while power posing didn’t actually change your testosterone levels, it did make people feel more powerful, which was still important. But even this seems unlikely to be true - a paper by Smith and Apicella didn’t even find that power posing had an effect on people’s feelings of power.

I’ve talked before about other titbits I’ve heard thrown about that I think are based on research that isn’t totally convincing. In a previous post on the ‘Gender Equality Paradox’, which claims that countries with higher levels of gender equality are likely to have fewer women in STEM, I noted that newer research indicates that this is highly contingent on which measure of gender equality you use. If, as in the original research, you use the GGGI metric of gender equality, you find a negative correlation between the number of female STEM students and gender equality. If you use the BIGI metric, you don’t find any correlation. The original claim hasn’t exactly been refuted, but I’ve heard lots of people talk about the supposed paradox, and very few of them mention how much debate there is about whether the paradox is actually a thing.

An interesting idea that I heard about during the vaccine roll-out was that if a government enters everyone who gets the vaccine into a lottery, you get higher vaccine take-up - the main example given was the vaccine lottery in Ohio. The interesting part of this was the claim about why this happens. Rather than just being a typical case of people responding to incentives, the reasoning I heard was that the people who refuse to get the vaccine have a higher-than-average tendency to overestimate the chance of highly unlikely events (like dying or getting severe side-effects from the vaccine), and so they are especially likely to find lottery tickets an appealing reward for getting the vaccine. But according to Walkey et al., the actual lottery incentive in Ohio did not result in increased rates of COVID-19 vaccinations. I assume that if the lottery incentive didn’t have an effect, the explanation of the mechanism behind the effect was bogus. A pity, because I actually found this one really interesting (and repeated it to a few friends).

Now, I don’t really think that going through a few ideas that sounded interesting to me and turned out to be bullshit is particularly good evidence for my hunch that interesting ideas are less likely to be true. But it is the case that I heard about all of these ideas before I knew they were bullshit, and for the latter two, I had to dig around to figure out that they weren’t true. The collider bias explanation for why interesting ideas would be more likely to be untrue makes intuitive sense to me. Is there any research on this? Vosoughi et al. (2018), writing in Science, claim that false stories spread faster on social media than true stories do. They write:

> Falsehood diffused significantly farther, faster, deeper, and more broadly than the truth in all categories of information, and the effects were more pronounced for false political news than for false news about terrorism, natural disasters, science, urban legends, or financial information. We found that false news was more novel than true news, which suggests that people were more likely to share novel information.

This isn’t exactly the same claim that I’m making, but does hint that there might be something to what I’m saying. False ideas are more likely to be novel than true ideas, and spread faster. I guess there’s something else going on here - if you’re not so worried about what’s true, you can just worry about what’s interesting in your attempt to get published. If you’re a fraud, and don’t care about the ‘this information is actually true’ criterion, you can write whatever interesting stuff you want!